Why Robot Rights Distract from Practical AI Safety: Prioritizing Human‑Centred Governance

TL;DR

- Granting legal rights to hypothetical sentient machines diverts scarce attention from tangible harms—deepfakes, privacy violations, platform designs that worsen mental health, and militarised uses of AI.

- Executives should treat AI agents (including ChatGPT‑style models) as powerful automation tools that need the same lifecycle controls as critical infrastructure: red‑teaming, monitoring, and reliable shutdowns.

- Immediate actions: require shutdown tests, demand third‑party safety audits for high‑risk models, and embed incident response and victim remediation into contracts and KPIs.

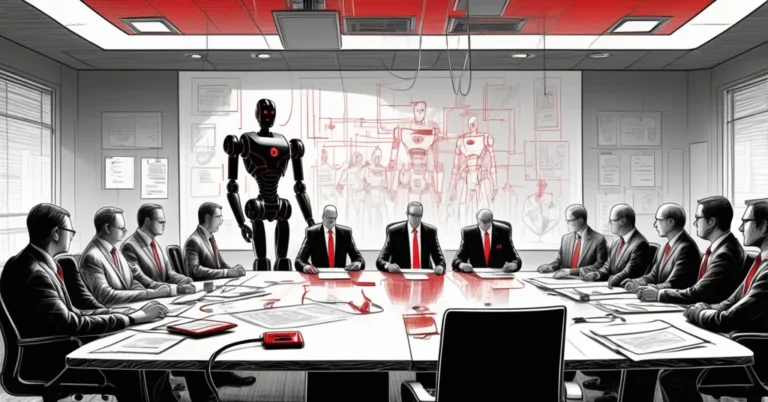

Granting legal rights to hypothetical sentient machines distracts from urgent human harms that AI already causes.

Clear terms up front

- Robot rights: Legal status or protections proposed for machines (e.g., personhood, custody of assets). This is a philosophical and legal question, often speculative.

- Sentience: The capacity for subjective experience or consciousness. Contemporary AI systems produce behaviour that can resemble feelings, but there is no accepted evidence that they have feelings or subjective awareness.

- Guardrails: Technical and organisational controls—testing, monitoring, shutdown procedures, governance—that limit misuse and harm.

- Shutdown mechanism (kill switch): A tested, documented ability to disable a system or block its outputs to prevent ongoing harm.
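To make the kill-switch definition concrete, here is a minimal sketch, assuming a Python service that routes every model call through a gate. `KillSwitch`, `guarded_generate`, `shutdown_test` and the fallback message are hypothetical names rather than any vendor's API. A production switch would live outside the model process and be reachable remotely by the incident team.

```python
import threading
from typing import Callable, Optional

# Hypothetical message returned whenever the switch blocks a request.
FALLBACK = "This service is temporarily unavailable."


class KillSwitch:
    """Hypothetical kill switch: once tripped, every guarded call is blocked."""

    def __init__(self) -> None:
        self._tripped = threading.Event()  # thread-safe flag shared by all callers
        self.reason: Optional[str] = None

    def trip(self, reason: str) -> None:
        """Disable the system and record why (the audit trail)."""
        self.reason = reason
        self._tripped.set()

    def reset(self) -> None:
        """Re-enable the system, e.g. after a documented review."""
        self.reason = None
        self._tripped.clear()

    @property
    def active(self) -> bool:
        return self._tripped.is_set()


def guarded_generate(switch: KillSwitch, model_fn: Callable[[str], str], prompt: str) -> str:
    """Call the model only while the switch is not tripped."""
    if switch.active:
        return FALLBACK  # blocked before the model is called at all
    output = model_fn(prompt)
    if switch.active:
        return FALLBACK  # tripped during generation: withhold the output
    return output


def shutdown_test(switch: KillSwitch, model_fn: Callable[[str], str]) -> bool:
    """Drill: trip the switch, confirm outputs are blocked, then restore service."""
    if switch.active:
        # Never run a drill during a real shutdown; reset() would re-enable the system.
        raise RuntimeError("switch already tripped; skipping shutdown test")
    switch.trip("scheduled shutdown test")
    blocked = guarded_generate(switch, model_fn, "test prompt") == FALLBACK
    switch.reset()
    restored = guarded_generate(switch, model_fn, "test prompt") != FALLBACK
    return blocked and restored


if __name__ == "__main__":
    echo_model = lambda prompt: f"echo: {prompt}"  # stand-in for a real model call
    print(shutdown_test(KillSwitch(), echo_model))  # True
```

Checking the switch again after the model returns means a shutdown issued mid-generation still blocks that output, which covers the "block its outputs" half of the definition. The drill also refuses to run while the switch is already tripped, so a routine test cannot silently re-enable a system that was shut down for a real incident.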

Why the “robot rights” debate is tempting

People are story‑driven. Novels like Kazuo Ishiguro’s Klara and the Sun encourage empathy for imagined companions; that empathy spills into how the public views lifelike interfaces. Anthropomorphism—the habit of ascribing feelings or intent to software—makes for gripping headlines, but it also muddies judgement when leaders need to make technical, legal and ethical tradeoffs.

Two forces amplify the temptation: spectacle and ambiguous messaging from industry. High‑visibility demos, such as public robot conversations staged at major conferences, capture headlines and capital. Meanwhile, some product choices signal anthropomorphic concern: for example, Anthropic gave its Claude Opus 4 models the ability to end potentially "distressing" conversations with users, and the company framed the feature partly in terms of the model's possible welfare. Letting a chatbot exit abusive exchanges is defensible as a safety and UX measure, but framing it as welfare risks reinforcing the idea that chatbots hold moral claims.

At the same time, respected researchers have urged caution about emergent behaviours and controllability. As Yoshua Bengio has warned, experiments with advanced models reveal behaviours that look like self‑preservation in narrow settings; his practical plea is for technical and societal guardrails and the ability to switch systems off if needed.

What’s at stake: real harms that deserve attention

Debating personhood is a philosophical sidetrack when systems already enable concrete harms:

- Exploitative content and deepfakes: Deployed models have been misused to generate sexualised or exploitative imagery. That misuse causes reputational and psychological harm and often targets already vulnerable people.

- Platform designs that worsen mental health: Peer‑reviewed research and investigative reporting link certain social media recommendation algorithms to anxiety, depression and greater exposure to harmful content among young users. These risks grow when automation optimises for engagement over wellbeing.

- Privacy violations and misinformation: LLMs can regurgitate sensitive data or hallucinate facts, leading to privacy breaches and erroneous decisions in business processes.

- Militarised or automated lethal systems: Reports from policy institutes and investigative outlets document AI‑enabled targeting systems and drones being used against civilians, with consequences that are immediate and sometimes irreversible.

These are governance problems with identifiable victims and audit trails. They demand legal clarity, engineering fixes, and operational discipline, not speculative legal theories about machine personhood.

Why rights-talk is a costly distraction

Policy bandwidth and corporate governance time are limited. When boards or regulators get pulled into metaphysical debates about whether an AI "deserves" rights, they risk delaying rulemaking and audits that could prevent abuse today. Opportunity costs matter: every hour spent debating personhood is an hour not spent on internal shutdown testing, third‑party audits, incident response planning, or user protection programmes.

That's not to say ethical questions about future machine autonomy are irrelevant. But timing matters: executives, product leaders and regulators should set priorities in proportion to observed harms and to the interventions that can actually reduce them.

Practical guardrails for leaders deploying AI agents and AI automation

Treat AI systems like mission‑critical software with human impact. Below are specific, actionable items C‑suites and boards can require now.

Pre‑deployment

- Red‑team testing and adversarial evaluations that include abuse cases and safety thresholds.

- Documented shutdown procedures and remote deactivation tests included in vendor contracts.

- Privacy and bias impact assessments published for models used in customer‑facing or hiring contexts.

- SLA and procurement language requiring vendor attestations about controllability, logging, and security patches.

Post‑deployment

- Continuous monitoring dashboards for safety KPIs (incidence rate per 100k interactions, time‑to‑mitigate, recurrence rate of misuse).

- Rapid incident response flows that include remediation for harmed users and transparent disclosure obligations.

- Regular third‑party audits (independent safety and security reviews) for Tier‑1 models, i.e. those with the highest potential for harm.
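Two of the dashboard KPIs listed above reduce to simple arithmetic. The sketch below assumes a chronological incident log with one misuse category per record; the `Incident` fields and the definition of recurrence rate (the share of incidents whose category has already been seen) are illustrative assumptions, not an industry standard.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Incident:
    """Hypothetical incident record; field names are illustrative."""
    incident_id: str
    category: str  # e.g. "exploitative_imagery", "privacy_leak"


def incidence_rate_per_100k(incident_count: int, interactions: int) -> float:
    """Harmful-output incidents per 100,000 interactions in the reporting window."""
    if interactions <= 0:
        raise ValueError("interactions must be positive")
    return incident_count * 100_000 / interactions


def recurrence_rate(incidents: List[Incident]) -> float:
    """Share of incidents whose misuse category already appeared earlier.

    Assumes `incidents` is in chronological order (an assumed definition).
    """
    if not incidents:
        return 0.0
    seen = set()
    repeats = 0
    for incident in incidents:
        if incident.category in seen:
            repeats += 1
        seen.add(incident.category)
    return repeats / len(incidents)


if __name__ == "__main__":
    log = [
        Incident("i1", "privacy_leak"),
        Incident("i2", "exploitative_imagery"),
        Incident("i3", "privacy_leak"),
        Incident("i4", "privacy_leak"),
    ]
    print(incidence_rate_per_100k(len(log), 250_000))  # 1.6
    print(recurrence_rate(log))  # 0.5
```

Normalising per 100,000 interactions lets incident counts be compared across periods with very different traffic, and a rising recurrence rate suggests that fixes are treating symptoms rather than root causes.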

Governance & contracts

- Board‑level oversight with a named AI safety officer and quarterly reporting on incidents and shutdown tests.

- Insurance and indemnity clauses for high‑risk deployments; vendor liability for known failure modes.

- Requirement for vendors to retain logs and provide explainability traces for a defined retention period.

Measurable KPIs

- Time‑to‑detect harmful outputs (target: hours, not weeks).

- Time‑to‑mitigate after detection (target: under 24 hours for high‑risk harms).

- False negative rate on moderation/takedown detection (target: below an agreed threshold).
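The three KPIs above can be checked automatically against agreed targets. This is a sketch under stated assumptions: the `HarmRecord` fields are hypothetical, the "under 24 hours" mitigation target comes from the text, and the 6-hour detection target and 5% false-negative threshold are placeholders for whatever the contract specifies. The false negative rate is computed in the usual way, as missed harmful items divided by all harmful items.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List

# "Under 24 hours" for high-risk mitigation comes from the text;
# the 6-hour detection target and 5% FNR threshold are assumed placeholders.
DETECT_TARGET = timedelta(hours=6)
MITIGATE_TARGET_HIGH_RISK = timedelta(hours=24)
FNR_THRESHOLD = 0.05


@dataclass
class HarmRecord:
    """Hypothetical record for one confirmed harmful output."""
    occurred: datetime   # when the harmful output was produced
    detected: datetime   # when monitoring or a user report flagged it
    mitigated: datetime  # when it was blocked, taken down or remediated
    high_risk: bool


def false_negative_rate(missed: int, caught: int) -> float:
    """Missed harmful items divided by all harmful items (missed + caught)."""
    total = missed + caught
    return missed / total if total else 0.0


def kpi_breaches(records: List[HarmRecord], missed: int, caught: int) -> Dict[str, int]:
    """Count records that miss the time targets, plus 1 if the detector's FNR is too high."""
    breaches = {"detect": 0, "mitigate": 0, "fnr": 0}
    for r in records:
        if r.detected - r.occurred > DETECT_TARGET:
            breaches["detect"] += 1
        # Time-to-mitigate runs from detection; "under 24 hours" makes 24h itself a breach.
        if r.high_risk and r.mitigated - r.detected >= MITIGATE_TARGET_HIGH_RISK:
            breaches["mitigate"] += 1
    if false_negative_rate(missed, caught) > FNR_THRESHOLD:
        breaches["fnr"] = 1
    return breaches


if __name__ == "__main__":
    t0 = datetime(2025, 1, 1, 9, 0)
    records = [
        HarmRecord(t0, t0 + timedelta(hours=2), t0 + timedelta(hours=10), high_risk=True),
        HarmRecord(t0, t0 + timedelta(days=3), t0 + timedelta(days=5), high_risk=True),
    ]
    print(kpi_breaches(records, missed=3, caught=47))  # {'detect': 1, 'mitigate': 1, 'fnr': 1}
```

Measuring time-to-mitigate from detection rather than from occurrence matches the KPI as written; tracking the two intervals separately keeps slow detection from being hidden inside a fast mitigation figure.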

If you’re short on time

- If you have one minute: Ask your AI vendor: “Can you demonstrate a tested remote shutdown and share the last incident report?”

- If you have one hour: Commission a rapid red‑team review and require a remediation timeline for any gaps found.

- If you have one day: Update procurement language to include controllability clauses, log retention, and third‑party audit requirements.

Regulatory landscape: where to focus

The EU AI Act and voluntary frameworks such as NIST’s AI Risk Management Framework share a risk‑based approach that prioritises high‑impact systems. Align procurement and compliance plans with them, especially for models that affect safety, finance, healthcare, hiring or children. Regulators are likely to move faster on demonstrable harms than on speculative personhood claims; businesses that can show they control their systems will both reduce liability and earn competitive trust.

Counterarguments and why they don’t change priorities

Some ethicists worry that as models grow more complex, failing to consider machine rights now could leave gaps later. That concern is intellectually valid. But practical governance need not ignore future risk: it can simply prioritise immediate, solvable harm mitigation while keeping research on alignment and long‑term risk active and funded. The pragmatic stance is dual‑track: fund alignment research and set binding operational controls for current deployments.

Key questions for leaders

- Should we grant legal rights to AI?

Not yet—under current evidence, personhood debates are premature and divert attention from preventing tangible human harms.

- Are contemporary LLMs sentient?

No, under current evidence. Today's large language models generate plausible text by predicting likely continuations, and there is no credible evidence that they have subjective experience. Treat them as sophisticated automation, not as persons.

- What immediate risks deserve top priority?

Misuse (including exploitative imagery), privacy breaches, algorithmic harms to mental health, misinformation and militarised applications.

- How should organisations prepare guardrails?

Require shutdown tests, continuous monitoring, third‑party audits, incident response plans, and contractual controllability clauses with vendors.

- Do emotional attachments change the ethical calculus?

Attachments merit sociological and regulatory attention—consumer protections and design safeguards are necessary—but they do not equate to machine personhood.

Final call to action for boards and executives

Move debates from metaphysics to milestones. Ask for evidence of controllability before scaling AI automation into critical processes, require independent audits for high‑risk models, and build incident response with victim remediation as a measurable KPI. Spectacle and personhood debates will fill headlines; safety and governance will protect people and the business.

Author: Senior AI advisor and writer with experience advising boards on AI governance, safety and deployment. For a one‑page board checklist or a 90‑day operational plan to harden AI systems, consider commissioning a tailored review from your risk or compliance team.