IREN Energizes 1.4 GW at Sweetwater — A Milestone for AI Infrastructure

Executive takeaway:

- Opportunity: IREN has turned on 1.4 GW of grid-connected power at Sweetwater, shortening the lead time for large AI deployments that need sustained GPU capacity.

- Risk: Energizing a site is an infrastructure milestone, not revenue. The company must still convert power into racked GPUs, signed long-duration contracts, and steady utilization.

- Next step: Watch for long-duration compute contracts, utilization metrics, and progress on the remaining 600 MW to reach the planned 2 GW campus.

What happened

On May 1, IREN energized (connected to the electricity grid and powered up) Sweetwater 1 in Texas, bringing 1.4 gigawatts of site power online and completing an interconnection with ERCOT (the Texas grid operator). This is the first phase of a planned 2 GW Sweetwater campus delivered via phased construction so customers can get capacity sooner rather than waiting for the entire build‑out.

Why grid‑connected power is the gating factor for AI data centers

Large language models and modern AI workloads require sustained access to thousands of high‑performance GPUs. GPUs consume power continuously during training and large‑scale inference: dozens to hundreds of kilowatts per rack, which aggregates into tens or hundreds of megawatts for a single facility. At that scale, the limiting resource becomes reliable, large‑scale power and the speed with which providers can turn that power into usable, contracted GPU hours.
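To make the rack-to-facility arithmetic concrete, here is a minimal sketch. The 100 kW rack density and the PUE of 1.25 are illustrative assumptions, not IREN figures, and the sketch treats the 1.4 GW as total site power rather than IT load.

```python
def facility_mw(racks: int, kw_per_rack: float, pue: float) -> float:
    """Total facility draw in MW for a given rack count.

    pue (power usage effectiveness) scales IT load up to cover cooling
    and other overhead; a value of 1.0 would mean no overhead at all.
    """
    if racks < 0 or kw_per_rack <= 0 or pue < 1.0:
        raise ValueError("racks >= 0, kw_per_rack > 0 and pue >= 1.0 required")
    return racks * kw_per_rack * pue / 1000.0


def racks_supported(site_mw: float, kw_per_rack: float, pue: float) -> int:
    """Whole racks that a given amount of total site power can feed."""
    if site_mw <= 0 or kw_per_rack <= 0 or pue < 1.0:
        raise ValueError("site_mw > 0, kw_per_rack > 0 and pue >= 1.0 required")
    it_kw = site_mw * 1000.0 / pue  # power left for IT equipment
    return int(it_kw // kw_per_rack)


# Hypothetical inputs: 100 kW per rack, PUE 1.25.
print(facility_mw(1000, 100.0, 1.25))      # 1,000 racks -> 125.0 MW
print(racks_supported(1400, 100.0, 1.25))  # 1.4 GW site -> 11200 racks
```

Under these assumed inputs, a thousand dense racks already land in the hundreds-of-megawatts range, which is why interconnection size dominates the planning conversation.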

Phased energization shortens customer lead times. Think of it like lighting a runway in stages so aircraft can land earlier: bring the first blocks of capacity online, validate connectivity and cooling, then ramp customers into those bays while later phases are still under construction.

How energized megawatts become billable AI compute

- Power and interconnection: grid connection and substation capacity must be in place (what IREN just achieved at Sweetwater 1).

- Site commissioning: power, cooling, and network validated; basic rack space available.

- Hardware deployment: GPUs, servers, switches racked and configured.

- Network and latency testing: ensure interconnects meet model requirements and customer SLAs.

- Contracts and onboarding: customers sign long‑duration compute agreements or colocation contracts.

- Utilization ramp: customers move workloads into production and begin paying for committed capacity or metered usage.
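The stages above can be sketched as a simple funnel from energized megawatts to monthly revenue. Every parameter name and value here is a hypothetical placeholder (IREN has not published these figures in this article), and the model ignores ramp timing, pricing tiers, and contract minimums.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average month: 8,760 hours / 12


@dataclass(frozen=True)
class FunnelAssumptions:
    """Hypothetical inputs; none of these are disclosed IREN numbers."""
    energized_mw: float        # total site power online
    commissioned_share: float  # fraction that has passed site commissioning
    pue: float                 # facility power / IT power
    kw_per_gpu: float          # all-in IT power per GPU (server + network share)
    deployed_share: float      # fraction of GPU slots with hardware racked
    contracted_share: float    # fraction of racked GPUs under contract
    utilization: float         # fraction of contracted hours actually billed
    price_per_gpu_hour: float  # USD


def monthly_funnel(a: FunnelAssumptions) -> dict:
    """Walk the funnel stage by stage and return each intermediate value."""
    it_kw = a.energized_mw * 1000 * a.commissioned_share / a.pue
    gpu_slots = int(it_kw // a.kw_per_gpu)
    racked_gpus = int(gpu_slots * a.deployed_share)
    contracted_gpus = int(racked_gpus * a.contracted_share)
    billed_hours = contracted_gpus * HOURS_PER_MONTH * a.utilization
    return {
        "gpu_slots": gpu_slots,
        "racked_gpus": racked_gpus,
        "contracted_gpus": contracted_gpus,
        "billed_gpu_hours": billed_hours,
        "revenue_usd": billed_hours * a.price_per_gpu_hour,
    }


example = FunnelAssumptions(
    energized_mw=100, commissioned_share=0.5, pue=1.25, kw_per_gpu=2.0,
    deployed_share=0.5, contracted_share=0.5, utilization=0.5,
    price_per_gpu_hour=2.0,
)
for stage, value in monthly_funnel(example).items():
    print(f"{stage}: {value:,.0f}")
```

Because each stage multiplies the one before it, a shortfall anywhere in the chain (slow commissioning, unsigned contracts, weak utilization) cuts revenue proportionally, regardless of how much power is energized.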

Market reaction and valuation context

Investors welcomed the milestone. IREN shares rose 6.18% on May 1, closing at $45.66 on elevated volume and remaining above their 200-day moving average. Analysts are optimistic but conditional: a consensus-style price target from Simply Wall St sits near $70.40, and some optimistic models project up to $8.7 billion in revenue by 2031 under best-case assumptions. Those upside scenarios depend on execution: winning multi-year compute contracts, keeping utilization high, and completing the remaining build-out on schedule.
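For readers who want to check the market figures, the short sketch below derives two numbers the paragraph implies but does not state: the prior close and the upside to the quoted target. It uses only the figures given above; the function names are illustrative.

```python
def implied_previous_close(close: float, pct_gain: float) -> float:
    """Back out the prior close from today's close and the percent gain."""
    return close / (1 + pct_gain / 100)


def implied_upside_pct(price: float, target: float) -> float:
    """Percent gain needed to move from the current price to the target."""
    return (target / price - 1) * 100


prev_close = implied_previous_close(45.66, 6.18)
upside = implied_upside_pct(45.66, 70.40)

print(f"Implied prior close: ${prev_close:.2f}")  # about $43.00
print(f"Upside to target: {upside:.1f}%")         # about 54.2%
```

The roughly 54% gap between price and target is a reminder of how much of the valuation rests on contracts and utilization that have yet to be reported.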

Risks and caveats executives should track

- Execution and capital intensity: Building to 2 GW requires heavy capex. Delays, cost overruns, or slower customer signings can dilute returns and pressure the balance sheet.

- Customer onboarding and sales cycles: Enterprise AI deals and hyperscaler relationships often take months of technical validation and contractual negotiation — energized racks don’t fill themselves.

- Utilization assumptions: Bullish revenue models assume high utilization and long‑duration contracts. Low utilization or commodity pricing pressure erodes margins.

- Grid and regulatory exposure: ERCOT has unique reliability dynamics and regulatory timelines; interconnection wins are necessary but not sufficient if operational constraints arise.

- Competition: Hyperscalers, large colocation providers, and other ex‑miner firms are all vying for the same grid capacity and enterprise customers.

- Sustainability and PPAs: Large AI sites will be scrutinized for energy sourcing. Securing renewables or credible offsets affects customer and investor appetite.

What CIOs evaluating AI capacity providers should ask

- Guaranteed power and SLAs: How many kilowatts are guaranteed per month, and what penalties apply if you don’t get that power?

- Time to production: After contract signature, what is the typical timeline to have racked, configured GPUs ready for production?

- Rack and GPU specs: Which GPU models and density (kW per rack) are supported? Can you bring your preferred hardware?

- Network and latency: What cross‑connect options, peering, and latency guarantees exist for your multi‑region architectures?

- Pricing and contract structure: Are there committed‑MW contracts, reserved GPU hours, or pay‑as‑you‑go options? How are overages billed?

- Redundancy and reliability: What redundancy design is used (for example N+1 or 2N), and what are the PUE targets and disaster recovery options?

- Energy sourcing: What percentage of energy is renewable or contracted via PPAs, and what are the carbon reporting practices?

- Ramp milestones: Request clear milestones that tie energized MW to racked GPUs and to customer billable hours.

Investor checklist for miners pivoting to AI infrastructure

- Is grid interconnection secured? Energized MW and an ERCOT interconnection materially reduce deployment risk.

- Are there signed long‑duration compute contracts? Look for multi‑year deals or anchor customers that underwrite capex.

- Utilization cadence: Ask for target utilization curves and the assumptions behind revenue forecasts.

- Capex and funding plan: How will the remaining build‑out be funded and what is the expected dilution or leverage?

- Competitive positioning: How does the company compare on speed‑to‑power and pricing versus hyperscalers and established colo players?

- Management track record: Experience in data center commissioning, enterprise sales, and large power projects matters.

- Regulatory and grid risk: Monitor ERCOT policies, interconnection queue risks, and potential curtailment exposures.

- ESG and community impact: Are there credible renewable sourcing plans and community engagement to avoid permitting or PR setbacks?

Practical metrics to watch next

- Megawatts online vs. megawatts contracted

- Racked GPUs and GPU hours billed

- Utilization percentage of installed GPU capacity

- Average revenue per GPU hour

- Time from contract signature to production (weeks/months)

- Progress on the remaining 600 MW to reach 2 GW and related capex cadence
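The metrics above reduce to a few ratios that can be tracked each reporting period. The sketch below shows one way to compute them; the `PeriodSnapshot` fields and the sample values are hypothetical, apart from the 1.4 GW online and 2 GW planned figures taken from the article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodSnapshot:
    """One reporting period; sample values below are placeholders."""
    mw_online: float
    mw_contracted: float
    racked_gpus: int
    gpu_hours_billed: float
    revenue_usd: float
    period_hours: float  # hours in the reporting period


def contracted_ratio(s: PeriodSnapshot) -> float:
    """Share of online megawatts that are under contract."""
    return s.mw_contracted / s.mw_online if s.mw_online else 0.0


def gpu_utilization(s: PeriodSnapshot) -> float:
    """Billed GPU hours as a share of hours the racked fleet could deliver."""
    available = s.racked_gpus * s.period_hours
    return s.gpu_hours_billed / available if available else 0.0


def revenue_per_gpu_hour(s: PeriodSnapshot) -> float:
    """Average realized price per billed GPU hour."""
    return s.revenue_usd / s.gpu_hours_billed if s.gpu_hours_billed else 0.0


def remaining_mw(planned_mw: float, online_mw: float) -> float:
    """Build-out still outstanding, floored at zero."""
    return max(planned_mw - online_mw, 0.0)


snapshot = PeriodSnapshot(
    mw_online=1400, mw_contracted=700, racked_gpus=1000,
    gpu_hours_billed=365_000, revenue_usd=730_000, period_hours=730,
)
print(f"Contracted share of online MW: {contracted_ratio(snapshot):.0%}")
print(f"GPU utilization: {gpu_utilization(snapshot):.0%}")
print(f"Revenue per GPU hour: ${revenue_per_gpu_hour(snapshot):.2f}")
print(f"Remaining build-out: {remaining_mw(2000, 1400):.0f} MW")
```

Tracking these as ratios rather than raw totals makes it easier to compare periods while the campus is still ramping.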

IREN secured ERCOT interconnection and energized Sweetwater 1 to reduce a key bottleneck: grid‑scale power that can be turned into predictable AI compute capacity.

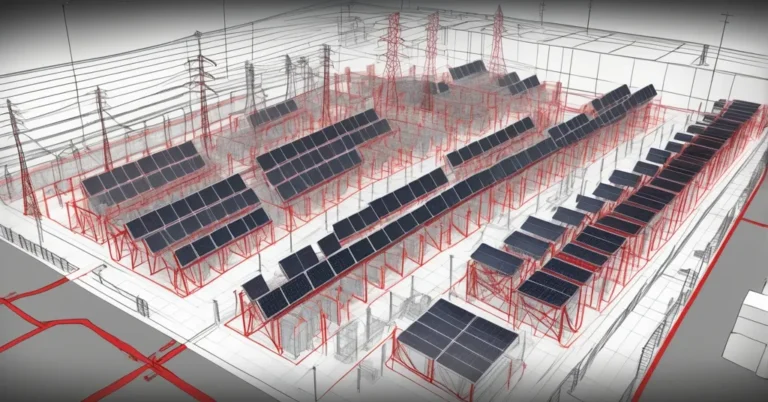

Visuals and analysis that add value

- Timeline graphic: Sweetwater phases (1.4 GW online → remaining 600 MW) with expected milestones.

- Simple flowchart: megawatts online → racked GPUs → contracted GPU hours → revenue.

- Compact table: market context (recent share move, 52-week range, analyst target).

Turning an energized site into a profitable AI cloud business is simple to describe and hard to execute. The engineering and grid hurdles are tangible prerequisites, but the commercial tests are winning predictable, long-duration enterprise contracts and maintaining high utilization. For CIOs, the energization of Sweetwater 1 signals additional supply to consider when planning large model training or sustained inference deployments. For investors, it is a key milestone that de-risks part of the build story, but not the whole monetization path.
