How to Build an Elastic Vector Database with Consistent Hashing, Sharding, and Live Ring Visualization for RAG Systems

TL;DR: Consistent hashing with virtual nodes (vnodes) lets you shard millions of embeddings across a cluster while moving only a small fraction of vectors when capacity changes. A compact Python simulator (SHA‑256 → u64 hashing, vnode-based ring, and live visualization) makes the trade-offs tangible: tune vnode count for balance, add replication for safety, and avoid full reindexing on every scale event. This approach reduces reindexing cost, improves operational predictability, and is a practical foundation for elastic vector stores powering RAG systems.

Why this matters for production vector DBs

Vector databases underpin recommendation engines, semantic search, and RAG (retrieval-augmented generation) pipelines. Embeddings can be millions or billions of vectors — rebuilding or redistributing them every time you scale is expensive: network I/O, index rebuild time, and query disruption add up. Consistent hashing with vnodes keeps most vectors on their current shard during topology changes, cutting reindexing scope and making capacity planning predictable. That translates directly to lower operational cost and less downtime for product teams and decision-makers.

- Business impact: fewer CPU-hours and network transfers during scaling events; faster recovery and predictable SLAs.

- Engineering wins: simpler rolling upgrades, less index rebuild, and smoother autoscaling for hybrid managed/on‑prem deployments.

- When to prototype vs managed: prototype if you need custom ANN integration, very fine-grained control, or heterogeneous hardware; consider managed vector DBs (Pinecone, Milvus, Weaviate) if you want operational simplicity.

How it works — the simple mechanics

At a high level:

- Consistent hashing maps items (embedding IDs or fingerprints) onto positions on a circular ring. Each storage node owns ranges on that ring.

- Virtual nodes (vnodes) are many small “seats” around the table assigned to each physical node; they reduce variance in how many keys each physical node receives.

- When nodes join or leave, only the keys mapped to the affected ring ranges move — typically a small fraction — avoiding full reindexing.

Simple metaphor: imagine a circular table with 2,000 chairs (vnodes). You assign those chairs to servers (physical nodes). When you add a server, it takes ownership of some chairs. Only guests sitting in those chairs must stand up and move — everyone else stays seated.

Key implementation details

- Deterministic hashing: The simulator uses SHA‑256, truncates the digest to 8 bytes, and interprets that as an unsigned 64‑bit integer to place vnode keys and look up vectors. Truncating to 8 bytes gives a uniform 64‑bit space with negligible collision probability for practical key counts, and it stays deterministic across languages if byte ordering is consistent.

- Lookup performance: Use a sorted array of vnode positions + binary search: O(log M) per lookup where M = total vnode count. For high throughput, shard lookups can be batched and cached.

- Vector generation for the demo: synthetic embeddings sampled from a normal distribution and L2‑normalized (dimension 256) so cosine similarity behavior aligns with production ANN systems.

“Consistent hashing with virtual nodes balances key placement and ensures only a small fraction of vectors move when nodes are added or removed.”

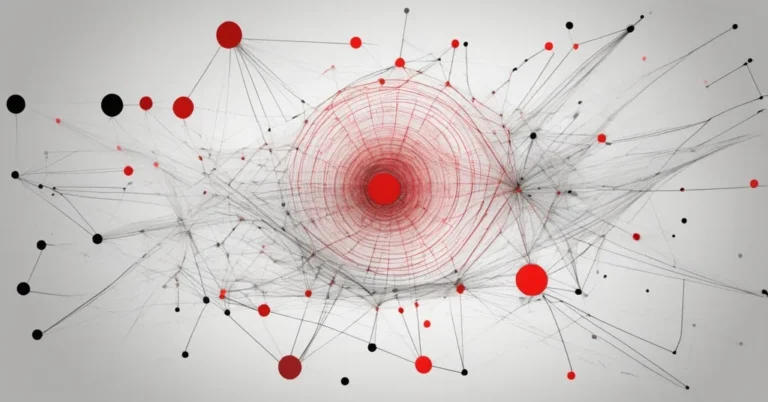

The simulator — what it shows and how to run it

The interactive Python simulator implements:

- ConsistentHashRing: vnode management (add/remove), sorted ring of u64 positions, and lookup.

- StorageNode dataclass: physical node metadata and vnode counts.

- VectorDBSimulator: generates N normalized embeddings (default N = 6,000, dim = 256, seed = 42), maps vectors to nodes, computes distribution, and computes movement_fraction when the ring changes.

- Live visualization using networkx + matplotlib and ipywidgets so you can add/remove nodes and change vnodes-per-node.

Example Python snippet for the hashing step (digest → u64):

import hashlib, struct

def sha256_to_u64(key_bytes):

h = hashlib.sha256(key_bytes).digest()

# take first 8 bytes, big-endian unsigned 64

return struct.unpack(">Q", h[:8])[0]Movement fraction is a straightforward metric:

def movement_fraction(before_map, after_map):

moved = sum(1 for k in before_map if before_map[k] != after_map[k])

return moved / len(before_map)Run the notebook in Colab or Jupyter. Dependencies include numpy, networkx, ipywidgets, matplotlib, and IPython.display. The full script and a “Run in Colab” badge are available in the repository for fork-and-run experimentation.

What the interactive demo makes tangible

Instead of abstract guarantees, the simulator lets you poke the ring and see:

- Histogram of keys owned per physical node (imbalance ratio).

- Which ring ranges moved after adding/removing a node (heatmap or ring overlay).

- How movement_fraction behaves as you vary vnodes-per-node.

Theoretical expectation: adding a new node to a cluster of C nodes will cause roughly 1/(C+1) of keys to remap to the new node’s ranges (so adding one node to a 4-node cluster moves ~20% of keys). Vnodes don’t change that global fraction, but they dramatically reduce variance in per-node counts and reduce the long-tail imbalance that can create hotspots.

Practical guidance:

- Start with ~80–200 vnodes per physical node for medium-sized clusters; increase if per-node key variance is still significant. The simulator defaults to 80 vnodes/node and allows 20–300 for experimentation.

- Measure imbalance ratio: max_keys/min_keys per physical node. Aim for ratios near 1.0–1.2 for steady performance.

- Run a batched test with a larger N (e.g., 1M vectors) to estimate actual data transfer and index rebuild time before making production changes.

Operational considerations and production patterns

Consistent hashing is a core building block, not a full production blueprint. The following covers common extensions and pitfalls for teams building elastic vector stores for RAG systems.

Replication and fault tolerance

- Simple approach: keep a replication factor R and store each vector on R distinct vnode successors. That increases read availability but multiplies storage and index build costs.

- Multi-ring / primary+replica: use separate rings for primaries and replicas so rebalancing primaries doesn’t force simultaneous replica churn.

- Ensure replication placement avoids correlated failures (rack/zone awareness).

Weighted nodes and heterogeneous hardware

Not all machines are equal. Weighted vnodes assign more virtual nodes to beefier servers so they take proportionally more keys. Implementation: scale the number of vnode entries per physical node by capacity weight. Add unit tests to ensure weight invariants hold across rebalance operations.

ANN integration, reindex cost, and query disruption

- ANN indexes (HNSW, IVF+PQ, FAISS) have different build times and memory characteristics. A rebalance that moves 10% of keys might still trigger long index builds on the receiving node.

- Mitigation patterns: incremental indexing, stream-based catch-up, temporary forwarding (serve queries from old node while building), or background rebuilds with warm caches.

- Monitor: index build latency, replication lag, and query latency spikes during rebalances.

Monitoring and SLOs

Instrument these metrics:

- movement_fraction per topology change

- imbalance ratio (max/min keys per node)

- index build time and throughput during rebalance

- query fanout and tail latency

Tuning rules of thumb

- Choose vnode count so total vnode entries M = vnode_per_node × node_count is comfortably larger than node_count — hundreds to thousands of vnodes across the cluster is common.

- If imbalance or hot keys persist, increase vnodes-per-node rather than micro‑optimizing hash function choices.

- Plan for the expected movement fraction: adding k nodes to C nodes will move roughly k/(C+k) of keys (so vertical scaling or adding many nodes at once increases movement significantly).

Alternatives and when to choose them

Rendezvous hashing (a.k.a. highest-random-weight) offers simpler weighting semantics and avoids a global sorted ring, but it requires computing a candidate score per node per lookup (costly if node count is large). Range partitioning offers predictable range ownership but is brittle under skew and scale. For large, elastic vector stores that value minimal reshuffle and even distribution, consistent hashing + vnodes is a robust choice.

Next experiments to make the simulator production-ready

- Add replication and simulate read availability under node loss.

- Implement weighted vnodes and validate imbalance ratios across heterogeneous clusters.

- Map vnode movement to ANN index rebuild cost: estimate network transfer and index build CPU seconds for HNSW/FAISS per moved vector.

- Scale tests: move from 6k vectors to 1M+ in batched mode to capture real-world I/O and timing.

Questions & answers

-

What is the primary technique used to balance vectors and minimize reshuffling?

Consistent hashing with virtual nodes (vnodes) balances key placement across physical nodes and ensures only a limited fraction of vectors move when nodes are added or removed.

-

How are hash positions generated for the ring?

A SHA‑256 digest is truncated to the first 8 bytes and converted to an unsigned 64‑bit integer to deterministically place vnode keys and map vectors to positions on the ring.

-

How does the simulator measure how much data moves after topology change?

Using movement_fraction(before, after) = number_of_keys_that_change_shard / total_keys, calculated on the mapped embeddings to quantify reshuffling.

-

What default demo settings illustrate behavior?

The demo uses 6,000 normalized embeddings (dim 256, seed 42) and an adjustable vnode-per-node parameter (default 80) so you can see distribution and movement in a tight, interactive loop.

-

Does consistent hashing validate the minimal data movement claim for elastic vector stores?

Yes. Both theory and the simulator show that only the keys mapped to affected ring ranges move on topology changes — the fraction is predictable (roughly 1/(C+1) when adding one node to C nodes) and tunable in practice via vnodes and operational patterns.

Key takeaways

- Consistent hashing + vnodes is an effective foundation for elastic vector stores because it limits reindexing and yields predictable movement fractions during scale events.

- Vnodes reduce per-node variance; replication and weighted vnode assignments handle availability and heterogeneous capacity respectively.

- Real production systems need ANN-aware reindexing strategies, careful monitoring, and planned rolling updates to avoid query disruption.

- Run the simulator, scale it to larger vector counts, and measure movement_fraction, index rebuild time, and network I/O to estimate real operational cost before committing to a design.

Tools and links for next steps: FAISS (https://github.com/facebookresearch/faiss), HNSWlib (https://github.com/nmslib/hnswlib), Pinecone (https://www.pinecone.io), Milvus (https://milvus.io), Weaviate (https://weaviate.io). Fork the simulator repo, run the interactive notebook, and use the experiments to convert an academic guarantee into an operational plan for your RAG pipeline.