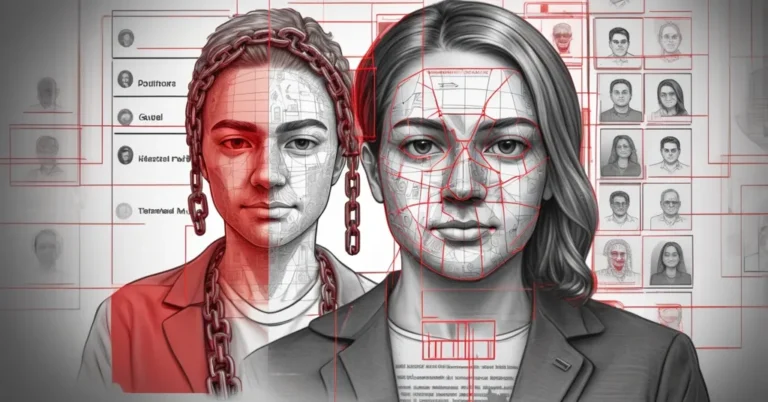

When intimate violence goes digital: what the Fernandes case reveals about deepfakes, identity abuse and platform responsibility

Trigger warning: this piece discusses sexualized abuse, impersonation and threats. If these subjects are difficult for you, please consider pausing or seeking support.

Business leaders, platform teams and legal counsel should pay attention: the Collien Fernandes–Christian Ulmen case is not just celebrity drama. It exposes how AI-generated deepfakes and online impersonation are reshaping intimate-partner violence, forcing companies and regulators to decide how to detect, respond to and legally classify digital sexual abuse.

Key definitions: what leaders need to know

Deepfake / AI-generated deepfake — synthetic images, audio or video created by machine learning that can convincingly portray a real person doing or saying things they never did.

Identity impersonation (online impersonation) — creating fake profiles, sending messages or posting content that appears to come from someone else, without necessarily using AI to synthesize new imagery.

Digital intimate-partner violence (digital sexual violence) — abusive behaviours by current or former partners carried out online: humiliation, distribution of sexual material, impersonation, doxxing or threats designed to control or punish.

What happened, and why it matters

Collien Fernandes, a public figure in Germany, has publicly accused her former partner Christian Ulmen of long-term digital abuse: fake profiles created in her name, men contacted as if by her, and sexualized images and videos circulated and presented as her. After a 2024 documentary exploring pornographic material attributed to her, Fernandes says Ulmen confessed; Ulmen denies the allegations. Fernandes filed a complaint in Spain — where she and her family had moved — citing stronger legal protections for digital and gender-based violence.

“It turned him on to humiliate me for years.” — Collien Fernandes

Her description of the abuse as “virtual rape” captures the emotional and sexualized harm survivors report, though the term sits uncomfortably with legal categories that still rely on physical acts. Ulmen’s lawyer countered publicly:

Ulmen had “never produced and/or distributed deepfake videos of Ms Fernandes or any other person.”

Those competing statements underscore the core problem: victims feel the same harm whether the material is a manipulated deepfake or fabricated impersonation. But law, platforms and remediation processes treat those scenarios differently.

How the technology works — and why detection is getting harder

Creating convincing deepfakes no longer requires specialist labs. Off-the-shelf tools, public datasets and cloud compute have made high-fidelity face and voice synthesis accessible to hobbyists and abusers. Voice cloning can match a person’s cadence from a few seconds of audio; face-swap models can paste a likeness into an existing video.

Detection used to rely on obvious artifacts — mismatched lighting, jittery mouth movements, odd blinks. As models improve, those artifacts vanish. Multimodal synthesis (combining video and voice) and small edits that reuse real footage make automated detection brittle. Platforms that rely solely on filters will miss well-crafted fakes. Human review helps, but scale and safety for moderators are real constraints.

Legal landscape: Spain, Germany and the problem of classification

Jurisdictions vary in whether they treat AI-generated imagery, identity impersonation or the distribution of sexualized material as distinct crimes. Spain has recently strengthened laws around gender-based violence and digital abuse, creating clearer pathways for complaints and protective measures. Germany, by contrast, still struggles to map identity-based online abuse and synthetic media to existing statutes, which complicates investigations and remedies.

That gap matters for victims and for organizations. Forum shopping, meaning choosing the country or court most likely to help, becomes a practical consideration when deciding where to file. The gap also shapes what platforms and law enforcement are asked to do: take down a video, trace an account, or treat a case as an intimate-partner offence with protective orders.

Legal clarity changes evidence requirements, possible penalties and the speed of protection. Until laws catch up, victims may face long waits for takedowns, inconsistent cross-border cooperation and uncertainty about whether the person responsible can be held to account.

Public reaction, politics and the costs of visibility

The case prompted demonstrations across German cities and a polarized social-media debate reminiscent of other celebrity controversies. Protesters rallied behind Fernandes, who reportedly faced death threats and felt compelled to wear a bulletproof vest at a public demonstration. High visibility pushes the conversation into the open, but it doesn’t guarantee faster legal remedies for the many non-celebrities experiencing similar abuse.

Political reframing also distracts from practical fixes. Some voices attempted to recast the discussion through unrelated political angles rather than address gaps in legal protection and platform practices. That diversion slows the policy momentum needed to protect people affected by digital sexual violence.

Platform responsibility and limits

Platforms sit at the intersection: they host content, enable distribution and often are the first line of response. Practical constraints and trade-offs include:

- Automated detection produces both false positives and false negatives. Tuning a detector aggressively enough to catch high-fidelity fakes also raises the number of false alarms on legitimate speech.

- Human moderation scales poorly and exposes moderators to trauma.

- Cross-border legal requests are slow; platforms often lack standardized fast-tracks for urgent cases involving safety or sexualized threat.

- Identity verification tools can reduce impersonation but raise privacy and accessibility concerns.

Platforms must balance speed, accuracy and rights preservation while providing clearer reporting flows and specialist escalation lanes for digital sexual violence.
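The false-positive versus false-negative trade-off in the first constraint above can be made concrete with a small sketch. Everything here is an assumption for illustration: the `Sample` type, the `error_counts` helper, the score scale and the toy data are invented and do not describe any real detector or platform.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    score: float        # hypothetical detector score in [0, 1]; higher = more likely synthetic
    is_synthetic: bool  # ground-truth label, invented for this illustration


# Invented toy data, not measurements from any real detector.
SAMPLES = [
    Sample(0.95, True),
    Sample(0.80, True),
    Sample(0.55, True),   # a well-crafted fake that scores only moderately
    Sample(0.30, True),   # a fake that reuses real footage and scores low
    Sample(0.70, False),  # legitimate content that happens to look suspicious
    Sample(0.40, False),
    Sample(0.20, False),
    Sample(0.10, False),
]


def error_counts(samples, threshold):
    """Return (false_positives, false_negatives) when flagging score >= threshold."""
    false_positives = sum(
        1 for s in samples if s.score >= threshold and not s.is_synthetic
    )
    false_negatives = sum(
        1 for s in samples if s.score < threshold and s.is_synthetic
    )
    return false_positives, false_negatives


if __name__ == "__main__":
    for threshold in (0.25, 0.50, 0.75):
        fp, fn = error_counts(SAMPLES, threshold)
        print(f"threshold={threshold:.2f} false_positives={fp} false_negatives={fn}")
```

On this toy data, lowering the threshold catches every fake but flags two legitimate items, while raising it clears the legitimate items and misses two fakes. No single threshold removes both kinds of error, which is why a filter on its own is not enough.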

What business leaders should do — 5-step corporate response

- Create an incident playbook — include steps for detection, reporting, takedown requests, legal escalation and internal communications that respect survivor privacy.

- Invest in detection + human review — combine automated tools that flag likely synthetic media with trained teams for high-sensitivity cases.

- Build cross-border legal readiness — map key jurisdictions (laws, contact points, evidence standards) and pre-establish law-enforcement and counsel contacts.

- Support victims — offer remediation services, identity-repair guidance and a clear, confidential reporting channel for employees and customers.

- Publish transparent policies — explain what constitutes impersonation, the takedown process and expected timelines; report on enforcement in transparency reports.
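As a rough sketch of how the second step (automated flags combined with trained human review) might be encoded in a playbook, the routing rule below sends reports to one of three queues. The `Report` fields, the `Queue` names and the `REVIEW_THRESHOLD` value are hypothetical placeholders; a real policy would come from trust-and-safety and legal teams.

```python
from dataclasses import dataclass
from enum import Enum


class Queue(Enum):
    SPECIALIST_ESCALATION = "specialist_escalation"  # trained team, fastest handling
    HUMAN_REVIEW = "human_review"
    STANDARD = "standard"


@dataclass
class Report:
    synthetic_score: float       # hypothetical detector output in [0, 1]
    sexualized_content: bool     # flagged by the reporter or a classifier
    impersonation_claimed: bool  # reporter says the content poses as them
    safety_threat: bool          # threats or other immediate safety risk


REVIEW_THRESHOLD = 0.5  # assumed value, purely illustrative


def route(report: Report) -> Queue:
    """Pick a queue; a low detector score never downgrades a safety-related report."""
    if report.safety_threat or (
        report.sexualized_content and report.impersonation_claimed
    ):
        return Queue.SPECIALIST_ESCALATION
    if (
        report.sexualized_content
        or report.impersonation_claimed
        or report.synthetic_score >= REVIEW_THRESHOLD
    ):
        return Queue.HUMAN_REVIEW
    return Queue.STANDARD
```

The design choice worth noting is that the detector score can only raise the priority of a report. Sexualized impersonation is escalated even when the detector sees nothing unusual, since well-crafted fakes and non-AI impersonation both evade automated filters.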

Frequently asked questions

Does it matter legally whether an image is a deepfake or a staged impersonation?

Yes. Legal classification drives evidence rules, applicable statutes and remedies. The survivor’s experience can be identical, but courts and platforms treat the origins of content differently.

Can platforms reliably detect every deepfake?

Not reliably. Detection tools are improving but are not foolproof. Faster, higher-fidelity synthesis and reuse of real footage reduce detection rates, so human-led escalation and contextual investigation remain essential.

Should victims always pursue legal action in another country?

Not always. Filing abroad can be effective where protections are clearer, but cross-border cases add complexity, cost and emotional burden. Legal counsel should weigh protections, evidence needs and practical enforceability.

What role should regulators play?

Regulators should clarify definitions of digital sexual violence, mandate reasonable platform processes for urgent takedowns and incentivize interoperable cross-border law-enforcement cooperation. The EU’s recent digital safety frameworks may be a model, but specifics matter.

Policy directions and next steps

Three policy priorities deserve immediate attention. First, harmonize legal definitions so that identity abuse and AI-generated synthetic media are not gaps victims slip through. Second, mandate faster platform escalation lanes for sexualized threats and impersonation cases that threaten safety. Third, fund research into detection that privileges safety and accuracy while protecting civil liberties.

Industry must act too. Companies building generative AI and platforms hosting user content should fund independent audits of misuse risks, support rapid takedown mechanisms, and publish transparency reports that show how they handle impersonation and synthetic media complaints.

“The tools that make such abuse possible are no longer exceptional. They are ordinary.” — Fatma Aydemir

That ordinariness is the hard business problem: technologies that democratize creation also democratize harm. Boards, CTOs and general counsels must treat digital sexual violence as a material risk—both reputational and legal—and prepare practical systems to reduce harm.

Action now reduces future cost. Start with clearer policies, better cross-border preparation and survivor-centred incident response. Platforms and regulators will need to move faster than they have; otherwise the legal gaps revealed by the Fernandes case will continue to leave victims navigating an inconsistent, fragile system while abusers exploit readily available tools.

Who should act today? Platform policy teams, CTOs, HR and legal counsel. Build the playbook, test it against realistic scenarios, and ensure channels exist for rapid, humane response when digital intimate-partner violence occurs.